OSHMPI: OpenSHMEM Implementation over MPI

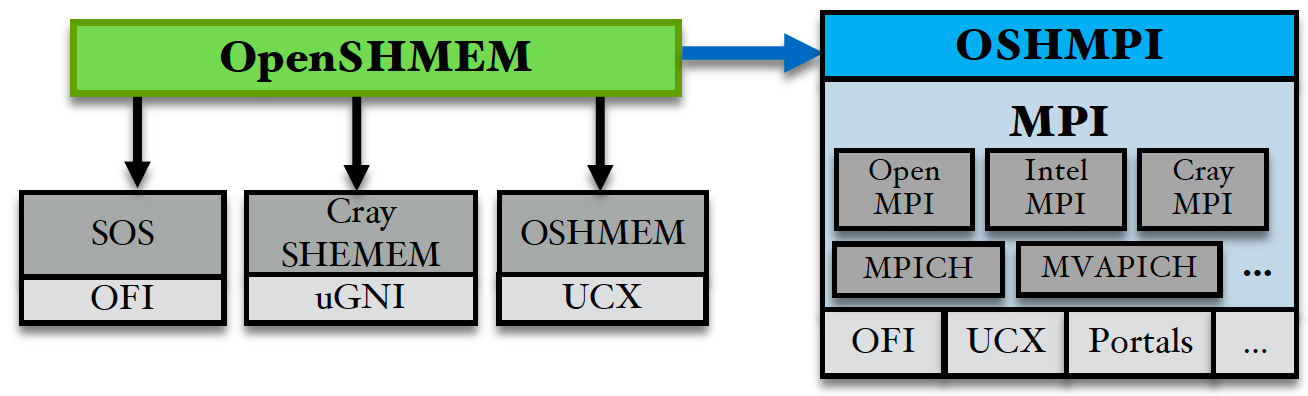

OpenSHMEM is an increasingly popular standard for the SHMEM programming model. Many OpenSHMEM implementations exist, such as Sandia OpenSHMEM, OSHMEM in Open MPI, and Cray SHMEM. Many of these implementations support only one or a few network providers and platforms.

MPI, on the other hand, has been the de facto standard for parallel programming for more than two decades. It is available on a wide range of platforms and is widely used by the scientific computing community. The OSHMPI project explores how MPI's portability can be leveraged to support OpenSHMEM communication, and investigates optimizations that bring its performance close to that of native OpenSHMEM implementations.

Key Features

- Full support for the OpenSHMEM 1.4 specification.

- Function inlining for all OSHMPI internal routines.

- Caching of internal MPI communicators for collective operations over PE active sets.

- Support for OpenSHMEM multithreading safety.

- Active-message support for OpenSHMEM atomic operations. MPI accumulate operations cannot be used directly because MPI does not guarantee atomicity between different reduce operations.

- Optional asynchronous progress thread support for active-message-based atomics.
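
The communicator caching feature listed above can be illustrated with a minimal sketch. It is not OSHMPI's actual code: the names `comm_cache_t`, `comm_cache_get`, and `create_comm` are hypothetical, and an integer handle stands in for `MPI_Comm` so the example compiles without MPI. The idea shown is only that an OpenSHMEM 1.4 active set is identified by the triplet (`PE_start`, `logPE_stride`, `PE_size`), so a communicator built for one triplet can be looked up and reused by later collectives over the same set.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for an MPI communicator handle so this sketch
 * compiles without MPI; a real implementation would store an MPI_Comm. */
typedef int comm_handle_t;

#define CACHE_CAPACITY 16

/* One cached communicator, keyed by the active-set triplet. */
typedef struct {
    int pe_start;
    int log_pe_stride;
    int pe_size;
    comm_handle_t comm;
} cache_entry_t;

typedef struct {
    cache_entry_t entries[CACHE_CAPACITY];
    size_t count;
    int creations; /* number of communicators created so far */
} comm_cache_t;

static void comm_cache_init(comm_cache_t *cache)
{
    assert(cache != NULL);
    cache->count = 0;
    cache->creations = 0;
}

/* Placeholder for the expensive step. With real MPI this could, for
 * example, build a group with MPI_Group_range_incl and then call
 * MPI_Comm_create_group (an assumption, not OSHMPI's confirmed method). */
static comm_handle_t create_comm(comm_cache_t *cache)
{
    cache->creations++;
    return cache->creations; /* unique fake handle */
}

/* Return the communicator for an active set, creating it on first use. */
static comm_handle_t comm_cache_get(comm_cache_t *cache, int pe_start,
                                    int log_pe_stride, int pe_size)
{
    assert(cache != NULL);

    /* Linear search: the number of distinct active sets is assumed small. */
    for (size_t i = 0; i < cache->count; i++) {
        const cache_entry_t *e = &cache->entries[i];
        if (e->pe_start == pe_start && e->log_pe_stride == log_pe_stride &&
            e->pe_size == pe_size)
            return e->comm; /* cache hit: reuse the communicator */
    }

    /* Cache miss: create the communicator and remember it if room remains. */
    comm_handle_t comm = create_comm(cache);
    if (cache->count < CACHE_CAPACITY) {
        cache_entry_t *e = &cache->entries[cache->count++];
        e->pe_start = pe_start;
        e->log_pe_stride = log_pe_stride;
        e->pe_size = pe_size;
        e->comm = comm;
    }
    return comm;
}
```

In this sketch, two calls to `comm_cache_get` with the same triplet return the same handle and trigger only one creation, while a different triplet triggers a new one. A linear search is used for simplicity; a hash table would be a reasonable alternative if many distinct active sets were expected.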

Portability Showcase

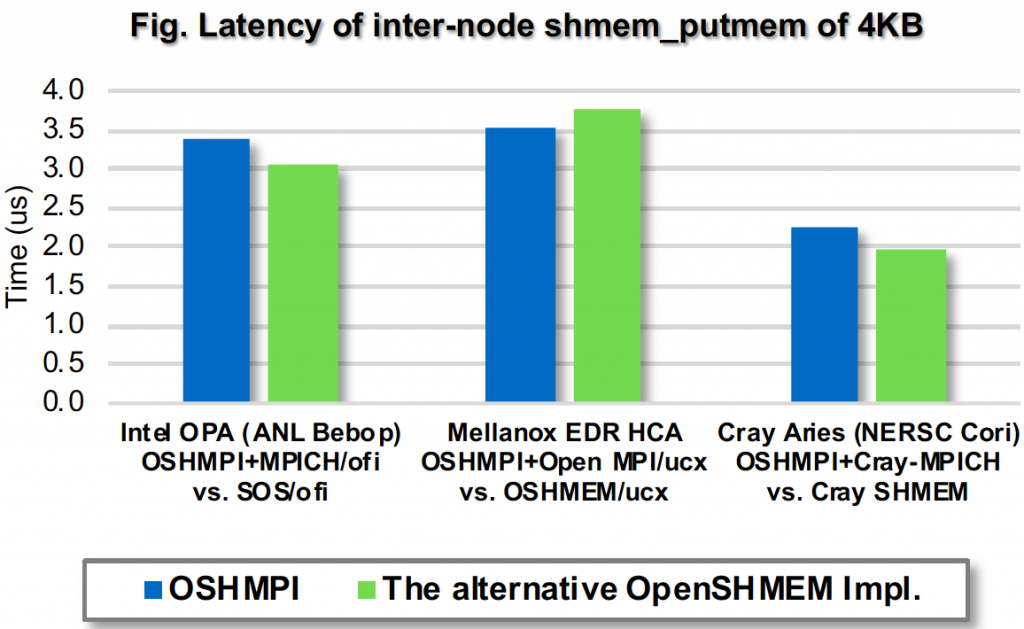

- The figure compares OSHMPI against various OpenSHMEM implementations on their respective target platforms.

Performance Showcase

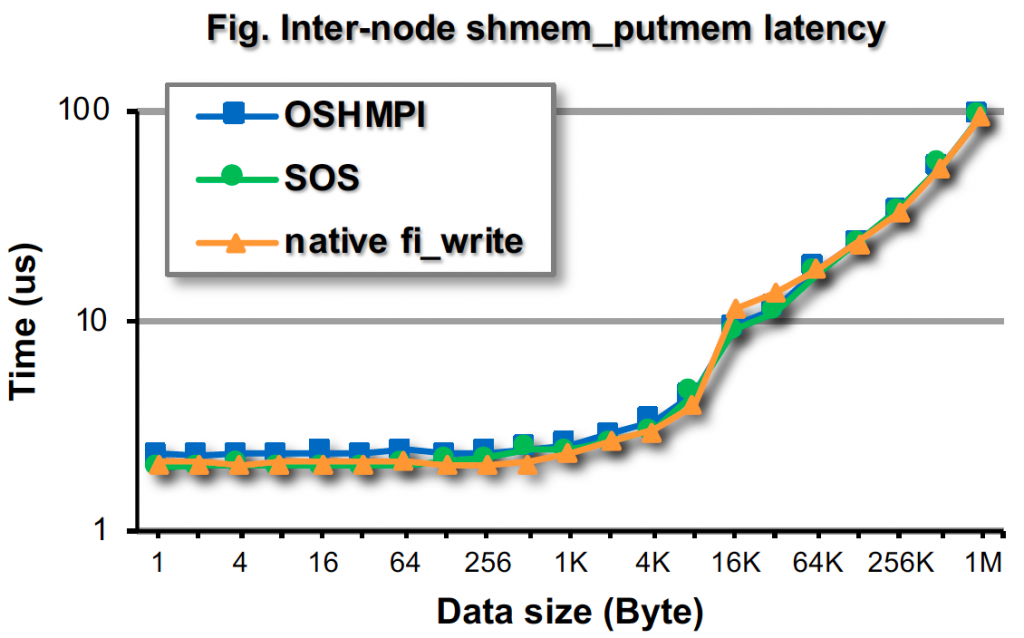

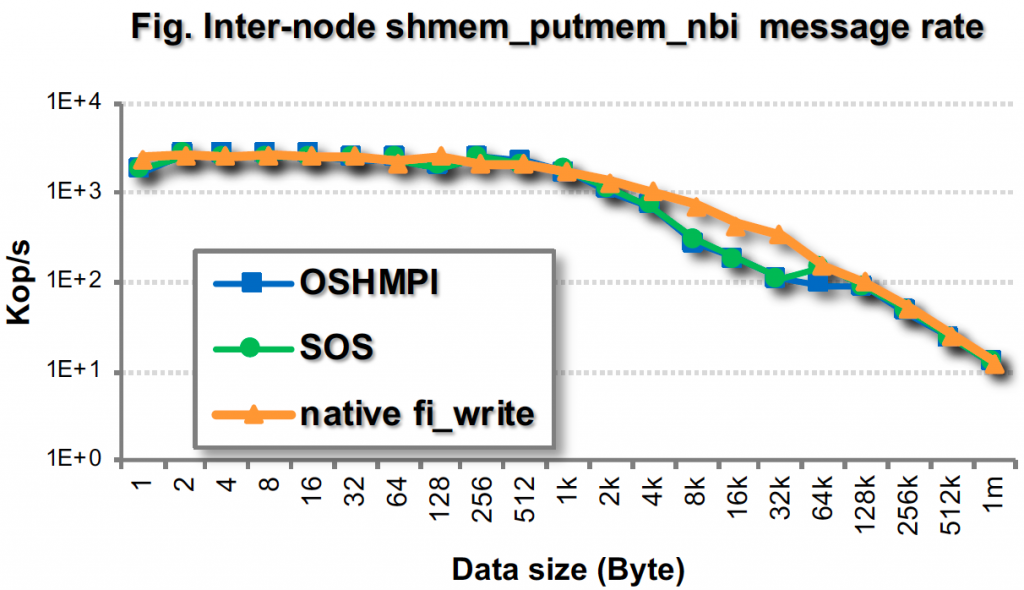

- OSHMPI achieves latency and message rate comparable to Sandia OpenSHMEM (SOS) using OFI/psm2 on an Intel Omni-Path cluster.

- OSHMPI also comes close to the raw communication performance, which is measured using only the native OFI write/read operations.